As a data infrastructure architect, you aren’t just looking for “a place to put bits.” You’re looking for a foundation that can handle the IOPS-hungry demands of modern AI clusters, high-speed databases, and real-time analytics—all without blowing the budget on “provisioned performance” taxes.

Cloud block storage is a persistent storage architecture that presents raw block-level volumes to virtual machines, Kubernetes clusters, or bare-metal systems over a network. It has evolved from a simple virtual disk into a sophisticated software-defined ecosystem. This guide evaluates the current landscape, breaks down performance metrics, and explores why software-defined architectures like Lightbits are redefining what’s possible in the cloud.

What Are the Best Cloud Block Storage Solutions Available Today?

The “best” solution depends entirely on your specific workload, but the market is currently divided into two primary categories: native cloud service provider (CSP) offerings and software-defined storage (SDS).

| Feature | AWS io2 Block Express | Azure Ultra Disk | Portworx | Lightbits |
|---|---|---|---|---|
| Architecture | Managed cloud disk | Managed cloud disk | Kubernetes-native SDS | NVMe/TCP-direct SDS |
| Typical Latency | 500µs–2ms | ~1ms | Depends on backend | Sub-200µs |
| Performance Scaling | Provisioned IOPS | Provisioned IOPS | Node dependent | Linear scale-out |
| Multi-Cloud | No | No | Partial | Yes (license is portable across cloud platforms) |
| Cost Model | Provisioned IOPS | Provisioned IOPS | License-based | Capacity-centric |

While native solutions offer convenience, Lightbits is designed for environments that need consistent, scalable NVMe/TCP performance across cloud infrastructure: sub-millisecond latency and high throughput, without the high costs typically associated with top-tier native cloud volumes.
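The difference between the "Provisioned IOPS" and "Capacity-centric" cost models in the table can be made concrete with a little arithmetic. The sketch below is illustrative only: the function names are made up for this example, every rate passed in is a hypothetical placeholder rather than a published price, and real price lists often add tiering that this ignores.

```python
def provisioned_monthly_cost(capacity_gib, iops, price_per_gib, price_per_iops):
    """Provisioned model: pay for capacity plus every provisioned IOPS."""
    return capacity_gib * price_per_gib + iops * price_per_iops


def capacity_monthly_cost(capacity_gib, price_per_gib):
    """Capacity-centric model: pay for capacity only; IOPS are not metered."""
    return capacity_gib * price_per_gib


# Placeholder rates, chosen only to show the shape of the comparison.
GIB_RATE = 0.10    # hypothetical price per GiB-month
IOPS_RATE = 0.05   # hypothetical price per provisioned IOPS-month

cost_10k_iops = provisioned_monthly_cost(1000, 10_000, GIB_RATE, IOPS_RATE)
cost_50k_iops = provisioned_monthly_cost(1000, 50_000, GIB_RATE, IOPS_RATE)
cost_flat = capacity_monthly_cost(1000, GIB_RATE)

print(f"provisioned, 10k IOPS: {cost_10k_iops:.2f}")
print(f"provisioned, 50k IOPS: {cost_50k_iops:.2f}")
print(f"capacity-centric:      {cost_flat:.2f}")
```

Under the provisioned model the bill grows with the IOPS you reserve even when capacity stays the same; under the capacity-centric model it does not. To make a real comparison, plug in the current rates from each vendor's price list.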

How Do You Measure Cloud Block Storage Performance?

If you’re still just looking at max IOPS, you’re only seeing part of the picture. To truly evaluate a block storage solution, you must measure the four pillars of storage performance:

- IOPS: Critical for small-block database workloads.

- Throughput: Vital for large-block sequential workloads like streaming analytics or backup.

- Latency: Measured in microseconds. Average latency alone is misleading in distributed cloud storage systems; tail latency (p95 and p99) matters more because it determines how consistent application performance is and directly affects SLAs. Occasional latency spikes can severely impact transactional databases, Kubernetes stateful workloads, and AI inference pipelines.

- Queue Depth: How many I/O requests the storage can handle concurrently before latency begins to spike.
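How these metrics relate can be shown with a few lines of arithmetic. The following is a minimal sketch, assuming you already have per-I/O latency samples in microseconds; the helper names and the sample data are invented for illustration and are not part of any benchmarking tool. It uses the nearest-rank percentile method, throughput = IOPS × block size, and Little's Law (outstanding I/Os = IOPS × latency).

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def throughput_mib_s(iops, block_size_bytes):
    """Throughput implied by an IOPS figure at a given block size."""
    return iops * block_size_bytes / (1024 * 1024)


def outstanding_ios(iops, mean_latency_us):
    """Little's Law: average number of I/Os in flight at a given rate and latency."""
    return iops * mean_latency_us / 1_000_000


# Synthetic samples: 98 fast I/Os and two slow outliers.
latencies_us = [180] * 98 + [2500, 4000]
mean_us = sum(latencies_us) / len(latencies_us)

print(f"mean={mean_us:.1f}us  p50={percentile(latencies_us, 50)}us  "
      f"p95={percentile(latencies_us, 95)}us  p99={percentile(latencies_us, 99)}us")
print(f"100k IOPS at 4 KiB = {throughput_mib_s(100_000, 4096)} MiB/s")
print(f"100k IOPS at 200us needs about {outstanding_ios(100_000, 200):.0f} I/Os in flight")
```

In this synthetic data set the mean and median both look healthy, while p99 exposes the millisecond-scale outliers, which is why tail percentiles belong in any evaluation.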

Most enterprise architects benchmark cloud block storage using tools such as fio or vdbench across multiple block sizes (4K, 16K, 64K) and mixed workloads to evaluate real-world consistency under sustained load.
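A benchmark matrix like this is easy to script. The sketch below only builds fio command lines (it does not run them) for each block size and read/write mix. The target path, runtime, queue depth, and read percentages are assumptions to adjust for your environment, and any mix that includes writes will overwrite data on the target if you actually run it.

```python
import itertools
import shlex

BLOCK_SIZES = ["4k", "16k", "64k"]
READ_PERCENTAGES = [100, 70, 0]  # assumed mixes: read-only, 70/30, write-only


def fio_commands(target, runtime_s=300, iodepth=32):
    """Build one fio argument list per (block size, read percentage) pair."""
    commands = []
    for bs, read_pct in itertools.product(BLOCK_SIZES, READ_PERCENTAGES):
        commands.append([
            "fio",
            f"--name=randrw-{bs}-read{read_pct}",
            f"--filename={target}",
            "--ioengine=libaio",      # Linux asynchronous I/O
            "--direct=1",             # bypass the page cache
            "--rw=randrw",
            f"--rwmixread={read_pct}",
            f"--bs={bs}",
            f"--iodepth={iodepth}",
            "--time_based",
            f"--runtime={runtime_s}",
            "--percentile_list=50:95:99:99.9",
            "--output-format=json",
        ])
    return commands


# "/dev/example-device" is a placeholder, not a real device path.
for command in fio_commands("/dev/example-device"):
    print(shlex.join(command))
```

Running each mix for several minutes, rather than a few seconds, is what surfaces behavior under sustained load; the JSON output can then be fed into whatever percentile comparison you prefer.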

What is Low-Latency Block Storage and Why Does It Matter?

Low-latency block storage refers to storage systems capable of responding to I/O requests in the sub-200-microsecond range. In cloud-native environments, latency is introduced not only by media access time, but also by virtualization layers, network transport overhead, storage controller queues, and synchronous replication coordination.

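The architectural trade-off looks like this:

- Local NVMe delivers low latency but does not scale.

- A centralized SAN scales but is not low latency.

- NVMe/TCP-based SDS balances both.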

Why it matters: In a distributed system, storage latency is often the primary bottleneck for CPU efficiency. When a database waits for a write acknowledgment, CPU cycles are wasted.

By reducing latency, you:

- Increase Transaction Volume: Databases such as PostgreSQL and MongoDB can handle more transactions per second (TPS).

- Lower Costs: Faster I/O means you can often get the same performance from fewer compute instances.

- Improve User Experience: Low latency improves the performance of end-user applications that rely on real-time data retrieval.
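A back-of-the-envelope model shows why. Assume a single database connection that commits one transaction at a time and spends its entire commit time waiting for one storage write. That is a deliberate simplification (it ignores group commit, CPU time, and client round trips), but under it the commit rate is bounded by the inverse of write latency:

```python
def max_commits_per_second(write_latency_us):
    """Upper bound on serialized commits when each one waits for a single write."""
    if write_latency_us <= 0:
        raise ValueError("latency must be positive")
    return 1_000_000 / write_latency_us


for latency_us in (2000, 1000, 200):
    print(f"{latency_us:>5} us write latency -> "
          f"{max_commits_per_second(latency_us):,.0f} commits/s per connection")
```

Real databases batch and parallelize commits, so absolute numbers will differ, but the inverse relationship between write latency and per-connection commit rate still holds.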

How Does NVMe Improve Cloud Block Storage Performance?

NVMe® isn’t just a faster drive; it’s a total reimagining of the storage protocol. Unlike legacy SAS or SATA protocols designed for spinning disks, NVMe was built specifically for high-speed flash memory.

NVMe SSDs

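Legacy interfaces such as AHCI (used with SATA) expose a single command queue that holds up to 32 commands. NVMe supports up to roughly 64K I/O queues, each holding up to 64K commands. This lets modern multi-core CPUs issue I/O in parallel, typically with one queue per core, instead of bottlenecking on a single shared queue.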

NVMe requires fewer CPU cycles per I/O, freeing up more of your cloud compute for actual application logic.
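To put the queueing difference in numbers: the NVMe specification allows up to 65,535 I/O queues with up to 65,536 entries each (the figures usually rounded to "64K"), while AHCI, the host interface used for SATA, exposes one queue of 32 commands. The minimal sketch below computes the resulting ceilings and shows a simple one-queue-per-core mapping; `queue_for_core` is an illustrative helper, not a driver API.

```python
def max_outstanding(queues, depth):
    """Theoretical ceiling on commands in flight for a given queue layout."""
    return queues * depth


AHCI_LIMIT = max_outstanding(queues=1, depth=32)
NVME_LIMIT = max_outstanding(queues=65_535, depth=65_536)


def queue_for_core(core_id, io_queue_count):
    """Map a CPU core to an I/O queue; with one queue per core, no queue is shared."""
    return core_id % io_queue_count


print(f"AHCI ceiling: {AHCI_LIMIT:,} commands")
print(f"NVMe ceiling: {NVME_LIMIT:,} commands")
print([queue_for_core(core, 8) for core in range(8)])
```

In practice devices expose far fewer queues than the specification allows; the point is that each core can own its own queue rather than contend for a shared one.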

NVMe-over-Fabrics (NVMe-oF)

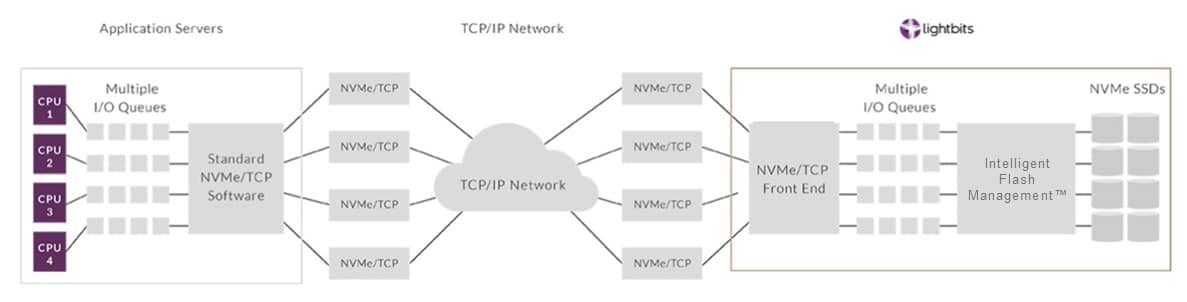

This is where Lightbits shines. NVMe-oF extends the NVMe protocol across the network, and Lightbits does so over standard TCP/IP. This means you get the performance of local flash with the flexibility and persistence of networked block storage.

NVMe/TCP

NVMe/TCP enables high-performance block storage using standard Ethernet infrastructure rather than requiring specialized Fibre Channel or InfiniBand networks. This dramatically simplifies deployment and reduces operational complexity.
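On a Linux host, attaching an NVMe/TCP volume is commonly done with the `nvme-cli` tool. The sketch below only assembles an `nvme connect` command line and does not execute it. The target address and subsystem NQN are placeholders, and 4420 is the port conventionally used for NVMe-oF I/O connections, so check what your target actually listens on.

```python
import shlex


def nvme_tcp_connect_command(target_addr, subsystem_nqn, port=4420):
    """Build the argument list for connecting to an NVMe/TCP subsystem with nvme-cli."""
    return [
        "nvme", "connect",
        "-t", "tcp",            # transport type
        "-a", target_addr,      # target IP address
        "-s", str(port),        # transport service ID (TCP port)
        "-n", subsystem_nqn,    # NVMe Qualified Name of the subsystem
    ]


# Placeholder values: a documentation-range IP and a made-up NQN.
command = nvme_tcp_connect_command("192.0.2.10", "nqn.2016-01.com.example:volume1")
print(shlex.join(command))
```

Nothing here requires special network hardware: the connection runs over the same Ethernet and TCP/IP stack the host already uses.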

Lightbits invented NVMe over TCP, and the protocol is natively integrated with LightOS. It’s the protocol of the future for high-performance data access on low-latency networking. Built on top of the TCP/IP software stack, NVMe/TCP enables efficient, streamlined block storage optimized for today’s multi-core application servers. Read the solution guide to learn more about NVMe/TCP.

How Do Cloud Block Storage Solutions Handle Replication and Failover?

Resilience in the cloud is about surviving the inevitable failure of a drive, a node, or an entire Availability Zone (AZ). Most solutions handle this via Synchronous Replication:

- An application writes data to the primary volume.

- The storage layer simultaneously writes that data to one or two replicas in different locations.

- The “Write Acknowledged” signal is sent only after all replicas are secure.
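The three steps above can be sketched as a toy in-memory model. This is a generic illustration of synchronous replication, not any vendor's implementation; the `Replica` and `ReplicatedVolume` classes are hypothetical, and a real system would write to replicas in parallel and handle partial failures rather than simply raising.

```python
class Replica:
    """One copy of the volume, living in its own failure domain."""

    def __init__(self, name):
        self.name = name
        self.blocks = {}
        self.online = True

    def write(self, lba, data):
        if not self.online:
            raise OSError(f"replica {self.name} is offline")
        self.blocks[lba] = data


class ReplicatedVolume:
    def __init__(self, replicas):
        self.replicas = replicas

    def write(self, lba, data):
        """Acknowledge (return True) only after every replica has stored the block."""
        for replica in self.replicas:
            replica.write(lba, data)  # raises if this replica cannot store the block
        return True


replicas = [Replica("zone-a"), Replica("zone-b"), Replica("zone-c")]
volume = ReplicatedVolume(replicas)
acknowledged = volume.write(42, b"payload")
print(acknowledged, [r.blocks[42] for r in replicas])
```

Because the acknowledgment waits for the slowest replica, write latency in a synchronously replicated system is set by the slowest copy, which is one reason network transport overhead matters so much.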

Lightbits’ Approach to Resiliency

Lightbits uses a clustering architecture that provides Elastic Data Protection. If a storage node fails, the system automatically redistributes the data and re-protects it without manual intervention. Because it uses NVMe-oF, this failover happens in seconds, often without the application even realizing there was an interruption.
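In general terms, re-protection after a node failure means finding every volume that lost a replica and placing a new copy on a healthy node. The toy sketch below illustrates that idea only. It is not Lightbits' algorithm, and the placement rule (pick the healthy node currently holding the fewest replicas) is an assumption made for the example.

```python
def reprotect(placement, failed_node, healthy_nodes):
    """Return a new volume -> nodes mapping with lost replicas re-created elsewhere.

    placement: dict mapping volume name to the list of nodes holding its replicas.
    healthy_nodes: nodes still available (must not include failed_node).
    """
    # Count the replicas each healthy node already holds.
    load = {node: 0 for node in healthy_nodes}
    for nodes in placement.values():
        for node in nodes:
            if node in load:
                load[node] += 1

    new_placement = {}
    for volume, nodes in placement.items():
        survivors = [node for node in nodes if node != failed_node]
        if len(survivors) < len(nodes):
            # Never put two replicas of the same volume on one node.
            candidates = [node for node in healthy_nodes if node not in survivors]
            if candidates:
                target = min(candidates, key=lambda node: load[node])
                survivors.append(target)
                load[target] += 1
        new_placement[volume] = survivors
    return new_placement


before = {"vol1": ["a", "b"], "vol2": ["b", "c"], "vol3": ["a", "c"]}
after = reprotect(before, failed_node="b", healthy_nodes=["a", "c", "d"])
print(after)
```

A production system also has to copy the data to the new replica and keep serving I/O from the survivors while it does so; this sketch covers only the placement decision.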

Why Lightbits is the Ideal Cloud Block Storage Solution

For platform engineers and architects, Lightbits represents the next generation of software-defined storage. It bridges the gap between the high cost of native cloud volumes and the complexity of managing local NVMe.

- High Performance: Delivers millions of IOPS with consistent sub-millisecond latency.

- Cost Efficiency: Unlike native public cloud volumes, there is no charge for provisioned IOPS. You pay for the storage you use, and the performance is inherent to the NVMe-oF architecture.

- Simplicity: Runs on standard Ethernet; no proprietary hardware or complex Fibre Channel setups are required.

- Multi-Cloud Ready: Maintain a consistent storage architecture across AWS and Azure to avoid provider lock-in.

The Bottom Line

Building a high-performance data stack requires moving beyond the limitations of legacy block storage. By leveraging NVMe-oF and a software-defined approach, you can build an infrastructure that is faster, more resilient, and significantly more cost-effective.

Ready to evaluate your storage strategy? Consider how a disaggregated, software-defined approach could decouple your compute and storage scaling for maximum flexibility. Schedule time with a Lightbits technology expert today to run a proof-of-concept benchmark.