As organizations scale their cloud-native workloads, the “stateless” ideal of Kubernetes often clashes with the realities of performance-intensive applications. As a storage platform architect in 2026, you’re no longer asking if Kubernetes can handle stateful workloads—you’re asking how to make it as resilient and performant as your legacy SAN, without the monolithic hardware overhead.

The shift toward Software-Defined Storage (SDS) has turned the choice of Kubernetes storage solution into a strategic architectural decision for data infrastructure. This Kubernetes Storage Solutions FAQ covers the mechanics of the Container Storage Interface (CSI), high availability (HA), the performance characteristics that define enterprise-grade container storage today, and the role of modern software-defined storage platforms.

What are Kubernetes Storage Solutions?

Kubernetes storage solutions are software-defined or hardware-backed systems that provide persistent, scalable storage for containerized applications running in Kubernetes clusters. These solutions allow applications to store and retrieve data independently of the lifecycle of containers or pods.

Common categories include:

- Software-Defined Storage (SDS): Platforms that run on commodity infrastructure and deliver storage services through software. NVMe-based disaggregated SDS platforms add further value: this modern architecture separates compute and storage while maintaining low-latency performance. (e.g., Lightbits persistent storage for Kubernetes, which uses NVMe over TCP to deliver high-performance block storage while maintaining the flexibility of software-defined infrastructure)

- Cloud-Native Storage: Storage systems designed specifically for container environments. (e.g., Longhorn)

- Cloud Managed Services: Public cloud storage integrated with Kubernetes. (e.g., Google Persistent Disk)

Look for Kubernetes storage solutions that provide:

- Persistent storage for stateful applications

- Dynamic volume provisioning

- High availability and data durability

- Integration through the Kubernetes Container Storage Interface (CSI)

What is the Best Storage Backend for Kubernetes Clusters?

| Storage Category | Key Architectural Advantage | Best For |
|---|---|---|
| Cloud-Native SDS | Optimized for NVMe over Fabrics (NVMe/TCP); provides thin provisioning and instant snapshots without performance loss. Example: Lightbits LightOS | High-performance databases, low-latency stateful apps, and massive scale. |
| Open Source | Fully software-defined and vendor-agnostic; creates a unified pool from local node disks. Example: Ceph | Architects seeking to avoid vendor lock-in for general-purpose workloads. |
| Managed Service | Deeply integrated into the cloud control plane; zero maintenance of the underlying hardware. Example: AWS EBS | Rapid prototyping or workloads where operational simplicity outweighs performance tuning. |
| Enterprise SAN/NAS | Leverages existing on-premises hardware via CSI drivers to bridge the gap to Kubernetes. Example: Pure Storage | Organizations extending existing on-premises SAN/NAS investments to Kubernetes. |
| Local Persistent Volumes | Zero network overhead; provides the maximum raw IOPS the local disks can deliver. | Distributed databases with their own replication (e.g., Cassandra, ScyllaDB, Elasticsearch). |

Why is Storage a Challenge for Kubernetes Workloads?

Kubernetes was originally designed for stateless workloads, where containers can be created and destroyed without retaining data. Bridging the gap to stateful workloads introduces four primary challenges:

- Ephemerality: Containers are designed to be temporary. If storage is tied directly to the container, data disappears when the container stops or is recreated.

- Portability: Kubernetes schedules pods dynamically across cluster nodes. A Pod might start on Node A and restart on Node B. Storage must follow the workload when pods move between nodes.

- Scalability: Large Kubernetes environments may run thousands of containers simultaneously. At that scale, storage provisioning must be automated, “just-in-time,” and policy-driven, not manually configured.

- High Performance: Modern workloads require predictable low latency and high throughput. Architects often deploy software-defined storage or disaggregated storage platforms, such as Lightbits SDS, that can scale independently of compute while maintaining performance.

How Does Storage Work in Kubernetes?

Kubernetes uses the Container Storage Interface (CSI) to connect storage systems with containerized applications. CSI provides a standardized framework that allows storage vendors to build a driver once and integrate with multiple container orchestration environments.

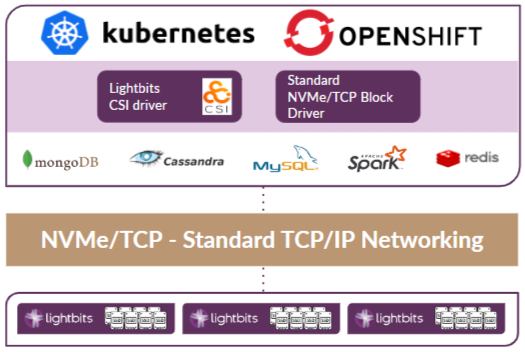

When a Pod needs storage, it doesn’t talk to the hardware directly. It interacts with the Kubernetes API, which uses a CSI driver to communicate with the actual storage backend (whether an SSD-backed volume in the cloud or a SAN in your data center). This abstraction enables storage automation while allowing architects to integrate different storage platforms into the Kubernetes ecosystem. Solutions such as Lightbits enable Kubernetes clusters to automatically provision persistent volumes from high-performance NVMe-based storage pools. [Figure 1.]

How Do CSI Drivers Work in Kubernetes?

Think of a CSI driver as a universal adapter: Kubernetes is the device, the storage system is the power source, and the CSI driver is the adapter that makes them compatible. The CSI driver is the component that enables your cluster to communicate with external storage systems in a standardized way, acting as a bridge between Kubernetes and the storage backend, such as Lightbits block storage. A deliberately simplified step-by-step flow looks like this:

- Pod created → references PVC

- PVC triggers volume creation

- CSI driver provisions storage

- Pod scheduled to node

- Volume attached + mounted

- App reads/writes data
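The simplified flow above can be sketched as a toy, in-memory walk-through. The RPC names in the comments (`CreateVolume`, `ControllerPublishVolume`, `NodeStageVolume`, `NodePublishVolume`) come from the CSI specification; the `MockCsiDriver` class, the `run_pod_with_pvc` helper, and the pod, claim, and node names are hypothetical stand-ins for work that Kubernetes components perform against a real driver. Some drivers skip the attach or staging step, so treat the order as illustrative, not as any vendor's implementation.

```python
"""Toy walk-through of the simplified CSI flow. Nothing here touches real storage."""
from dataclasses import dataclass, field


@dataclass
class MockCsiDriver:
    """Hypothetical mock that only records which CSI RPC would be issued."""
    calls: list = field(default_factory=list)

    def create_volume(self, name: str, size_gib: int) -> str:
        # CSI Controller service: CreateVolume (dynamic provisioning for a PVC).
        self.calls.append("CreateVolume")
        return f"vol-{name}"

    def controller_publish_volume(self, volume_id: str, node: str) -> None:
        # CSI Controller service: ControllerPublishVolume (attach volume to a node).
        self.calls.append("ControllerPublishVolume")

    def node_stage_volume(self, volume_id: str, node: str) -> None:
        # CSI Node service: NodeStageVolume (prepare the volume at a staging path).
        self.calls.append("NodeStageVolume")

    def node_publish_volume(self, volume_id: str, pod: str) -> None:
        # CSI Node service: NodePublishVolume (expose the volume at the pod's path).
        self.calls.append("NodePublishVolume")


def run_pod_with_pvc(driver: MockCsiDriver, pod: str, pvc: str,
                     size_gib: int, node: str) -> str:
    """Replay the steps in order: provision, attach, stage, then mount for the pod."""
    volume_id = driver.create_volume(pvc, size_gib)    # PVC triggers volume creation
    driver.controller_publish_volume(volume_id, node)  # pod scheduled, volume attached
    driver.node_stage_volume(volume_id, node)
    driver.node_publish_volume(volume_id, pod)         # volume mounted; app can do I/O
    return volume_id


if __name__ == "__main__":
    driver = MockCsiDriver()
    print(run_pod_with_pvc(driver, "db-0", "db-data", 10, "node-a"))
    print(driver.calls)
```

Recording the calls in a list keeps the sketch testable without a cluster; the point is the ordering, not the storage work itself.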

What is the Difference Between Persistent Storage and Ephemeral Storage?

Understanding this distinction is critical when designing Kubernetes infrastructure.

| Feature | Ephemeral Storage | Persistent Storage |
|---|---|---|
| Lifecycle | Tied to the lifetime of the Pod | Independent of the Pod lifecycle |
| Typical Use Cases | Temporary data processing, application caches, log buffering, scratch space for batch jobs | Databases, message queues, user data, analytics platforms, AI data pipelines |
| Data Recovery | Data is lost if the Pod is deleted | Data remains available for the next Pod |

Modern Kubernetes storage solutions, such as Lightbits, provide persistent block storage that automatically reconnects to new pods when workloads move.
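To make the ephemeral versus persistent distinction concrete, here is a minimal sketch that builds one Pod manifest with both kinds of volume: an `emptyDir` (ephemeral, removed with the Pod) and a `persistentVolumeClaim` reference (persistent). The manifest fields are standard Kubernetes API fields; the `pod_manifest` helper, the image name, the mount paths, and the claim name are assumptions chosen for illustration.

```python
import json


def pod_manifest(name: str, claim_name: str) -> dict:
    """Build a Pod manifest mounting one ephemeral and one persistent volume."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [{
                "name": "app",
                "image": "registry.example.com/app:1.0",  # hypothetical image
                "volumeMounts": [
                    {"name": "scratch", "mountPath": "/tmp/scratch"},
                    {"name": "data", "mountPath": "/var/lib/data"},
                ],
            }],
            "volumes": [
                # Ephemeral: created with the Pod and deleted with it.
                {"name": "scratch", "emptyDir": {}},
                # Persistent: backed by a PVC that outlives the Pod.
                {"name": "data",
                 "persistentVolumeClaim": {"claimName": claim_name}},
            ],
        },
    }


if __name__ == "__main__":
    # Kubernetes accepts manifests as JSON as well as YAML.
    print(json.dumps(pod_manifest("demo", "demo-data"), indent=2))
```

If this Pod is deleted and recreated, anything under `/tmp/scratch` starts empty, while `/var/lib/data` is served again from the same claim.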

What are a Kubernetes Persistent Volume (PV) and a Persistent Volume Claim (PVC)?

Kubernetes uses an abstraction model that separates storage consumption from storage provisioning.

- Persistent Volume (PV): A storage resource available within the cluster. It may be provisioned manually by an administrator or dynamically through a StorageClass. In either case, the PV represents the actual underlying storage.

- Persistent Volume Claim (PVC): A request for storage made by an application. A PVC specifies requirements such as capacity, access mode, and StorageClass, and Kubernetes binds it to a Persistent Volume that satisfies them.

Architect’s Note: By using StorageClasses, you can automate this entire process. Instead of manually creating PVs, the StorageClass acts as a “template” that creates a PV automatically whenever a user submits a PVC.
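As a minimal sketch of that template relationship, the snippet below builds a StorageClass and a PVC that refers to it by name. The field names are standard Kubernetes API fields; the provisioner string `csi.example.com`, the class name `fast-block`, and the helper functions are placeholders, so substitute the values documented by your CSI driver.

```python
import json


def storage_class(name: str, provisioner: str) -> dict:
    """StorageClass: the template used to create PVs on demand."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": provisioner,  # name registered by the CSI driver
        "reclaimPolicy": "Delete",
        "volumeBindingMode": "WaitForFirstConsumer",
        "allowVolumeExpansion": True,
    }


def pvc(name: str, storage_class_name: str, size: str) -> dict:
    """PVC: the application's request, pointing at the StorageClass by name."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class_name,
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    bundle = {
        "apiVersion": "v1",
        "kind": "List",
        "items": [
            storage_class("fast-block", "csi.example.com"),  # hypothetical names
            pvc("db-data", "fast-block", "100Gi"),
        ],
    }
    print(json.dumps(bundle, indent=2))
```

The only link between the two objects is the class name: submitting the PVC is enough for the provisioner named in the StorageClass to create a matching PV.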

How Does Persistent Storage Improve Kubernetes Infrastructure?

Persistent storage enables Kubernetes to support enterprise-grade stateful applications. Benefits include:

- Running databases directly on Kubernetes

- Supporting high-performance workloads, such as analytics and AI

- Maintaining data durability during pod rescheduling

- Simplifying storage operations

- Enabling disaggregated architectures where storage and compute scale independently
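For the database case, a StatefulSet with `volumeClaimTemplates` is the usual way to give each replica its own persistent volume. The sketch below is illustrative only: the `statefulset` and `claim_names` helpers, the image, and the class name are hypothetical, while the manifest fields and the `<template>-<statefulset>-<ordinal>` claim naming follow the Kubernetes StatefulSet API.

```python
def statefulset(name: str, image: str, replicas: int,
                storage_class_name: str, size: str) -> dict:
    """StatefulSet whose replicas each get a dedicated PVC from a template."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "StatefulSet",
        "metadata": {"name": name},
        "spec": {
            "serviceName": name,
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{
                    "name": name,
                    "image": image,
                    "volumeMounts": [
                        {"name": "data", "mountPath": "/var/lib/data"},
                    ],
                }]},
            },
            # One PVC is created per replica from this template.
            "volumeClaimTemplates": [{
                "metadata": {"name": "data"},
                "spec": {
                    "accessModes": ["ReadWriteOnce"],
                    "storageClassName": storage_class_name,
                    "resources": {"requests": {"storage": size}},
                },
            }],
        },
    }


def claim_names(manifest: dict) -> list:
    """PVC names Kubernetes derives: <template>-<statefulset>-<ordinal>."""
    template = manifest["spec"]["volumeClaimTemplates"][0]["metadata"]["name"]
    sts = manifest["metadata"]["name"]
    return [f"{template}-{sts}-{i}" for i in range(manifest["spec"]["replicas"])]


if __name__ == "__main__":
    sts = statefulset("db", "registry.example.com/db:1.0", 3, "fast-block", "100Gi")
    print(claim_names(sts))
```

Because each claim name is stable, a rescheduled replica such as `db-1` binds to the same claim again, which is what preserves its data across node moves.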

How Do You Ensure High Availability and Failover for Kubernetes Storage?

For on-premises clusters, you often need an SDS layer that creates a distributed storage fabric across your worker nodes.

| Feature | How SDS Ensures HA |
|---|---|
| Synchronous Replication | Writes are not confirmed until data is stored on N different nodes. |
| Self-Healing | If a storage node goes down, the system automatically reconstructs the missing data replicas on healthy nodes. |
| Data Locality | SDS can keep a replica on the same node as the pod to reduce latency, while maintaining remote replicas for failover. |
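The first two rows of the table can be illustrated with a toy in-memory model: a write is acknowledged only once N replicas hold it, and a node failure triggers re-replication from a surviving copy. The `ReplicatedVolume` class, the node names, and the placement policy (the first N healthy nodes in sorted order) are assumptions made for this sketch; it is not how Lightbits or any other SDS product is implemented, and data locality is not modeled.

```python
class ReplicatedVolume:
    """Toy model of synchronous replication with self-healing."""

    def __init__(self, nodes, replicas):
        if replicas < 1 or replicas > len(nodes):
            raise ValueError("replicas must be between 1 and the node count")
        self.replicas = replicas
        self.healthy = set(nodes)
        self.stores = {node: {} for node in nodes}  # node -> {block: data}

    def write(self, block, data):
        """Acknowledge only after the block is stored on N healthy nodes."""
        targets = sorted(self.healthy)[: self.replicas]
        if len(targets) < self.replicas:
            raise RuntimeError("not enough healthy nodes; write not acknowledged")
        for node in targets:
            self.stores[node][block] = data
        return targets  # returning here is the acknowledgement

    def fail_node(self, node):
        """Drop a node, then rebuild its replicas on remaining healthy nodes."""
        self.healthy.discard(node)
        lost_blocks = list(self.stores.pop(node, {}))
        for block in lost_blocks:
            holders = [n for n in sorted(self.healthy) if block in self.stores[n]]
            if not holders:
                continue  # no surviving replica to copy from
            spares = [n for n in sorted(self.healthy) if block not in self.stores[n]]
            for target in spares[: self.replicas - len(holders)]:
                self.stores[target][block] = self.stores[holders[0]][block]

    def replica_count(self, block):
        return sum(1 for n in self.healthy if block in self.stores[n])


if __name__ == "__main__":
    vol = ReplicatedVolume(["n1", "n2", "n3", "n4"], replicas=3)
    vol.write("block-0", b"payload")
    vol.fail_node("n2")
    print(vol.replica_count("block-0"))
```

In this model, losing one of four nodes leaves the replica count at the target because the spare node receives a copy; once fewer than N nodes remain healthy, new writes are refused instead of being acknowledged with reduced durability.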

A disaggregated, software-defined model is a gold standard for bare-metal HA. By separating your Kubernetes nodes from your Lightbits nodes, you decouple their failure domains: if a compute node crashes, your storage remains unaffected and accessible. Because storage is pooled across a dedicated Lightbits cluster, the PVC isn’t tied to a local disk; it exists as a logical entity across the Lightbits nodes.

Your Kubernetes worker nodes establish multiple TCP connections to different Lightbits storage nodes. If one network path or one storage node goes down, the Linux kernel on the worker node automatically reroutes traffic through the remaining paths. This happens in microseconds, preventing application-level I/O timeouts.
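The path failover idea can be shown with a small user-space model. In a real deployment the rerouting is done by the kernel's multipath layer, not by application code; the `MultipathVolume` class and the addresses below (documentation-range IPs with port 4420) are hypothetical and exist only to show the selection logic.

```python
class MultipathVolume:
    """Toy model: send each I/O over the first path that is still up."""

    def __init__(self, paths):
        self.paths = list(paths)  # ordered list of storage-node endpoints
        self.down = set()

    def mark_down(self, path):
        """Record that a network path or storage node has failed."""
        self.down.add(path)

    def submit_io(self, request):
        """Route the request over a surviving path, or fail if none remain."""
        for path in self.paths:
            if path not in self.down:
                return f"{request} via {path}"
        raise ConnectionError("all paths to the volume are down")


if __name__ == "__main__":
    volume = MultipathVolume(["192.0.2.10:4420", "192.0.2.11:4420"])
    print(volume.submit_io("write"))
    volume.mark_down("192.0.2.10:4420")
    print(volume.submit_io("write"))
```

The application-facing call stays the same before and after the failure; only the path chosen underneath changes, which is why the workload does not need to be aware of the failover.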

As Kubernetes adoption expands beyond stateless microservices, persistent storage platforms are becoming a core component of cloud-native infrastructure design. To learn more about persistent storage for Kubernetes, read our definitive guide, Persistent Storage for Containers.