Scale Your GenAI Platform with LightInferra: Faster Inference, Massive Context Windows, Shorter Time-to-Insight and Innovation

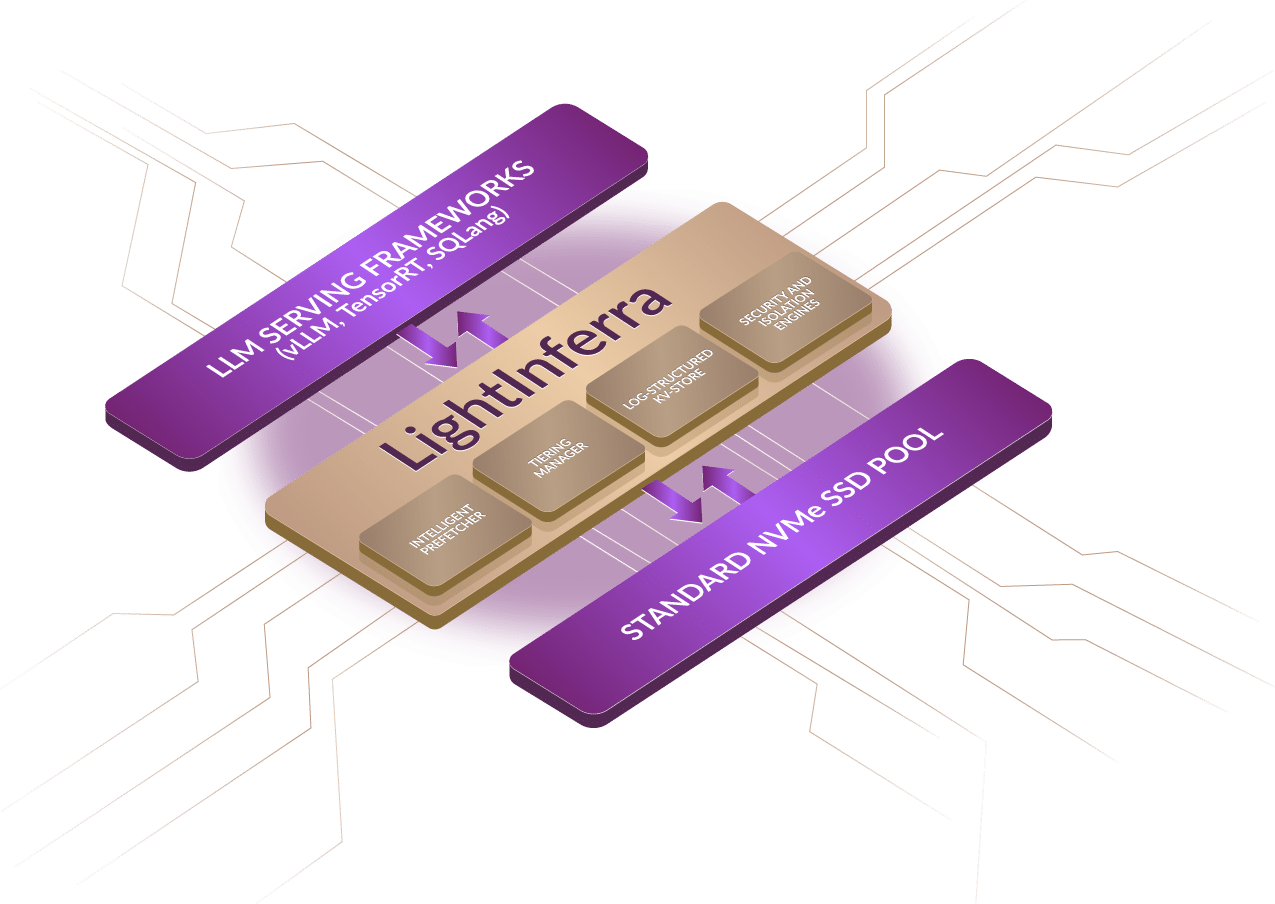

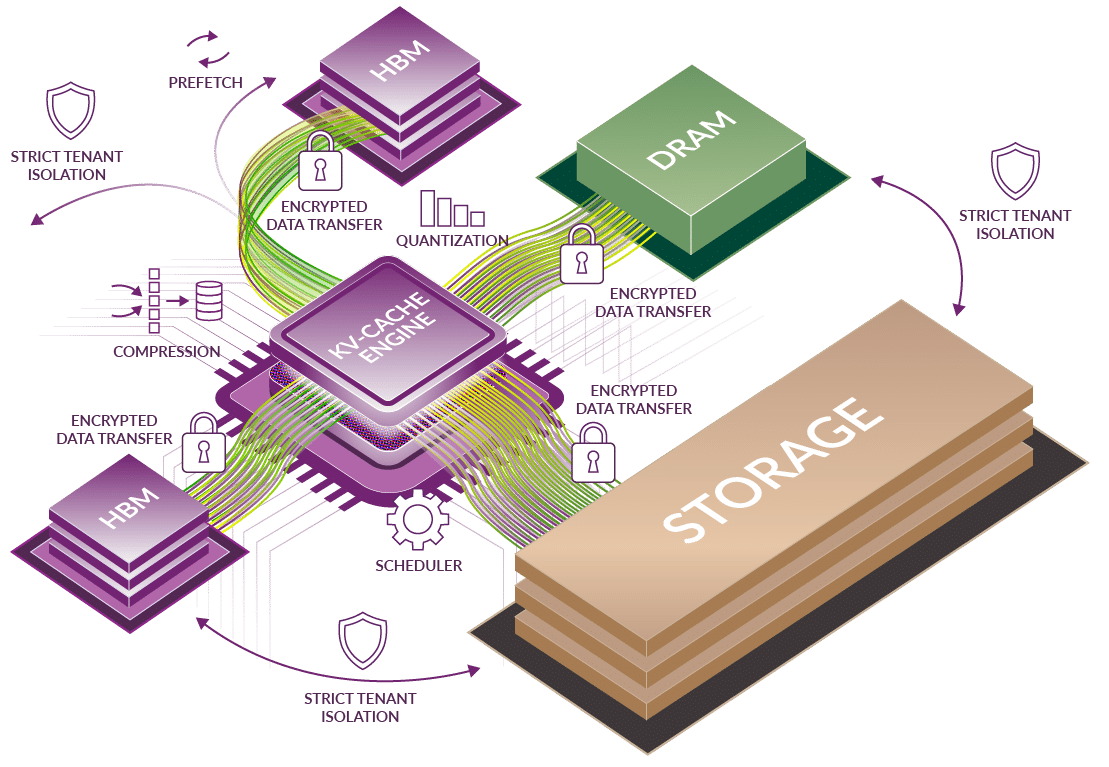

LightInferra™ is a major advancement in LLM infrastructure, delivering end-to-end optimizations across the LLM framework and the KV cache. It breaks through the memory wall, enabling practically unlimited KV cache capacity through smart tiering, sophisticated prefetching algorithms, and optimized scheduling. It achieves this while maintaining enterprise-grade security and service guarantees: encrypted data transfer, strict low-variance SLAs for QoS and latency, and agent-aware isolation and optimization of memory and context.
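To make the tiering and prefetching idea concrete, here is a minimal Python sketch of a generic two-tier KV cache. It is not LightInferra's implementation or API: the class `TieredKVCache`, its methods, the LRU eviction policy, and the string block IDs are all hypothetical, chosen only to illustrate how a small fast tier (standing in for GPU memory) can be backed by a much larger slow tier (standing in for host memory or storage), with prefetching used to promote blocks before they are needed.

```python
from collections import OrderedDict


class TieredKVCache:
    """Toy two-tier cache (hypothetical, for illustration only).

    fast: small LRU tier, standing in for GPU memory.
    slow: large backing tier, standing in for host memory or storage.
    """

    def __init__(self, fast_capacity):
        if fast_capacity < 1:
            raise ValueError("fast_capacity must be at least 1")
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # block_id -> KV data, oldest first
        self.slow = {}             # block_id -> KV data
        self.fast_hits = 0
        self.slow_hits = 0

    def _admit(self, block_id, kv):
        # Place the block in the fast tier as most recently used,
        # demoting least recently used blocks to the slow tier if full.
        self.fast[block_id] = kv
        self.fast.move_to_end(block_id)
        while len(self.fast) > self.fast_capacity:
            old_id, old_kv = self.fast.popitem(last=False)
            self.slow[old_id] = old_kv

    def put(self, block_id, kv):
        # New or updated blocks always enter the fast tier.
        self.slow.pop(block_id, None)
        self._admit(block_id, kv)

    def prefetch(self, block_ids):
        # Promote blocks expected to be needed soon, ahead of the request.
        for block_id in block_ids:
            if block_id in self.slow:
                self._admit(block_id, self.slow.pop(block_id))

    def get(self, block_id):
        if block_id in self.fast:
            self.fast_hits += 1
            self.fast.move_to_end(block_id)
            return self.fast[block_id]
        if block_id in self.slow:
            # Slow-tier hit: promote on access so repeat reads are fast.
            self.slow_hits += 1
            kv = self.slow.pop(block_id)
            self._admit(block_id, kv)
            return kv
        return None  # Miss: the caller would have to recompute the KV block.


cache = TieredKVCache(fast_capacity=2)
for i in range(4):
    cache.put(f"blk{i}", f"kv{i}")

cache.prefetch(["blk0"])   # promote blk0 before it is requested
print(cache.get("blk0"), cache.fast_hits, cache.slow_hits)
```

In this sketch, a block that was prefetched is served from the fast tier, while a block that was not is served from the slow tier and promoted on access. A production system would replace the LRU policy and the explicit `prefetch` call with workload-aware prediction and scheduling.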

KV Cache Storage System Architecture