Why NVMe/TCP disaggregated storage is the natural fit for RHOSO 18

Why Did Red Hat Build Red Hat OpenStack Services on OpenShift (RHOSO)?

OpenShift, Red Hat’s Kubernetes distribution, has become a leading enterprise container platform, and many enterprise IT teams already operate it or are moving toward it. Red Hat recognized that OpenStack customers needed a clear, low-friction path to that world, one that does not require abandoning the OpenStack APIs and operational model they rely on. The result is RHOSO: OpenStack re-platformed onto OpenShift, so customers get the familiar look and feel of OpenStack running natively on the platform their organization is already standardizing on.

RHOSO also opens a compelling migration path for a much broader audience. By hosting OpenStack services as pods, organizations gain:

- Single-platform operations — one cluster to monitor, patch, and upgrade.

- Kubernetes-native lifecycle management — rolling upgrades, health checks, and self-healing for OpenStack services.

- Unified networking — OVN-based networking shared across Kubernetes workloads and OpenStack VMs.

- Reduced operational footprint — no separate undercloud, director node, or parallel lifecycle to manage.

- A swift on-ramp to OpenShift — existing OpenStack customers can migrate to RHOSO and continue operating with familiar OpenStack APIs, while progressively adopting Kubernetes-native patterns at their own pace.

- A migration bridge for VMware refugees — organizations moving away from VMware can land their VMs on RHOSO first, then migrate workloads to OpenShift Virtualization or re-platform applications directly to containers, on their own timeline.

In short, RHOSO answers a simple question: how do you bring OpenStack customers into the Kubernetes era without asking them to throw away everything they know? Red Hat’s answer is to run OpenStack on OpenShift and let customers define their own journey from there.

What is RHOSO?

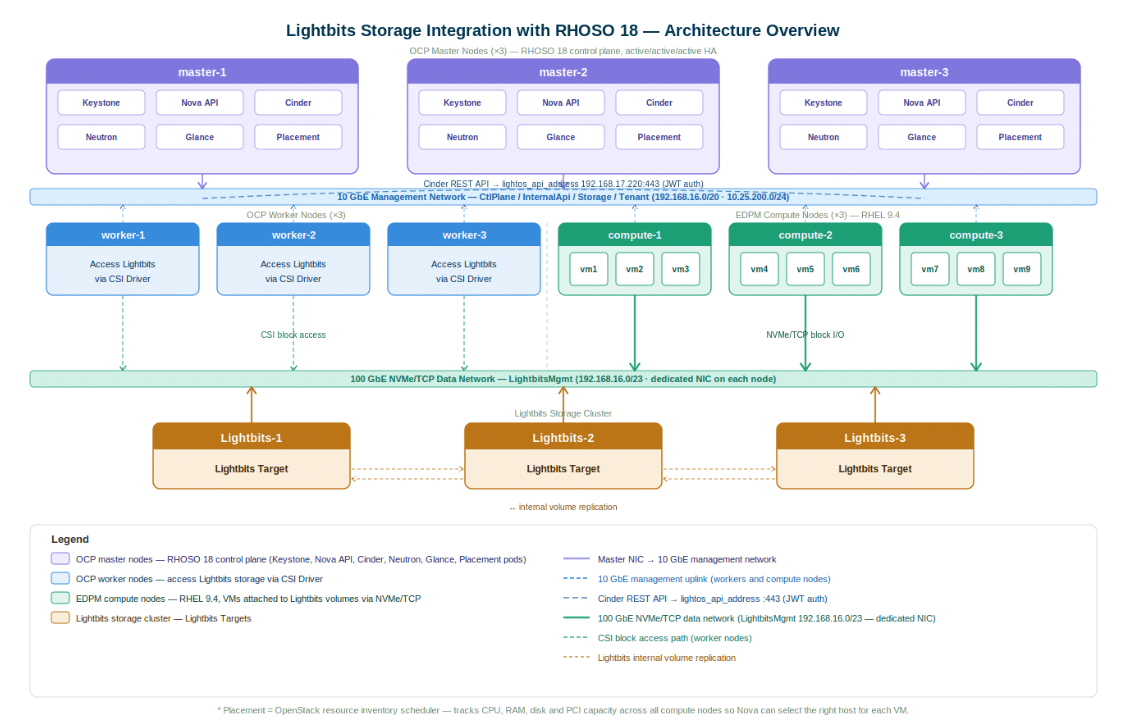

Instead of running OpenStack’s control plane services (Nova, Cinder, Neutron, Keystone, Glance, Placement) on dedicated bare-metal nodes, RHOSO runs them as Kubernetes pods on OpenShift Container Platform (OCP). The result is a unified platform where cloud infrastructure and containerized workloads share a single orchestration layer.

Compute workloads, meaning the virtual machines themselves, still run on dedicated RHEL 9.x nodes that are deployed and managed through External Data Plane Management (EDPM). This separation of control and data planes gives cloud administrators the stability of bare-metal compute together with the agility of Kubernetes-managed services.

Why Lightbits and NVMe/TCP for RHOSO?

RHOSO is a cloud platform — and cloud platforms are only as fast as their storage. Traditional SAN storage, NFS, or Ceph deployments introduce latency, operational complexity, or both. What RHOSO’s architecture demands is storage that matches its scale-out, disaggregated philosophy.

That’s exactly what Lightbits delivers. Lightbits is a disaggregated NVMe/TCP storage platform purpose-built for cloud infrastructure. Rather than bundling compute and storage resources together, Lightbits lets storage capacity and performance scale independently of compute, with hosts reaching that storage over standard Ethernet using the NVMe/TCP protocol.

What is NVMe/TCP?

NVMe over TCP (NVMe/TCP) carries the low-latency NVMe command set, originally designed for PCIe-attached SSDs, over standard TCP/IP Ethernet networks such as 100 GbE, with no specialized hardware or proprietary networking required. VMs on RHOSO compute nodes can access Lightbits volumes with performance approaching that of locally attached NVMe, at data-center scale.
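
For readers new to the protocol, the minimal Python sketch below shows what addressing an NVMe/TCP target involves: a transport type, a target IP address, a TCP port, and the subsystem's NVMe Qualified Name (NQN). It only builds a generic nvme-cli `connect` command line and runs nothing. On RHOSO compute nodes the Lightbits discovery client manages these connections, so this is illustrative rather than a deployment step; the IP address (a documentation range) and the NQN are placeholders, and 4420 is the conventional NVMe/TCP I/O port.

```python
import shlex
from dataclasses import dataclass


@dataclass(frozen=True)
class NvmeTcpTarget:
    """Addressing for one NVMe/TCP subsystem (all values illustrative)."""
    traddr: str          # IP address of a storage node's NVMe/TCP portal
    subnqn: str          # NVMe Qualified Name of the target subsystem
    trsvcid: int = 4420  # conventional NVMe/TCP I/O port


def nvme_connect_argv(target: NvmeTcpTarget) -> list[str]:
    """Build an nvme-cli `connect` argument list for the TCP transport.

    This only constructs the command; it does not execute it.
    """
    return [
        "nvme", "connect",
        "--transport=tcp",
        f"--traddr={target.traddr}",
        f"--trsvcid={target.trsvcid}",
        f"--nqn={target.subnqn}",
    ]


if __name__ == "__main__":
    # Placeholder target: documentation IP range and an example NQN.
    tgt = NvmeTcpTarget(
        traddr="192.0.2.10",
        subnqn="nqn.2016-01.com.example:subsystem1",
    )
    print(shlex.join(nvme_connect_argv(tgt)))
```

Running the module prints `nvme connect --transport=tcp --traddr=192.0.2.10 --trsvcid=4420 --nqn=nqn.2016-01.com.example:subsystem1`.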

Lightbits integrates with RHOSO at two levels simultaneously:

- As the Cinder block storage backend — the Lightbits volume driver runs inside the Cinder pod on the OpenShift control plane, authenticating to the Lightbits cluster REST API via JWT and provisioning volumes on demand.

- As a direct NVMe/TCP target for compute nodes — each EDPM compute node runs the Lightbits discovery client, which manages the NVMe/TCP connection lifecycle so that nova_compute can attach and detach volumes with zero additional configuration.

This dual integration means Lightbits participates in both the control path (volume provisioning, lifecycle, snapshots) and the data path (block I/O from VMs), while users and tooling keep working through the standard OpenStack APIs.
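
To make the control-path integration more concrete, here is a minimal Python sketch that renders the kind of cinder.conf-style backend stanza the cinder-volume service would need. The driver path and the `lightos_*` option names are modeled on the upstream Cinder LightOS driver and are assumptions to confirm against the Technical Reference Guide; the backend name, IP addresses, and token are placeholders. In a real deployment the JWT should come from an OpenShift Secret rather than being written inline.

```python
import configparser
import io

# Assumed driver path, modeled on the upstream Cinder LightOS driver;
# confirm it (and the option names below) in the Lightbits reference guide.
LIGHTOS_DRIVER = "cinder.volume.drivers.lightos.LightOSVolumeDriver"


def render_lightbits_backend(name: str, api_addresses: list[str], jwt: str,
                             api_port: int = 443, replicas: int = 3) -> str:
    """Return a cinder.conf-style snippet that enables one Lightbits backend."""
    if not api_addresses:
        raise ValueError("at least one Lightbits API address is required")
    if replicas < 1:
        raise ValueError("replicas must be at least 1")

    cfg = configparser.ConfigParser(interpolation=None)
    cfg.optionxform = str  # preserve option names exactly as written
    cfg["DEFAULT"] = {"enabled_backends": name}
    cfg[name] = {
        "volume_backend_name": name,
        "volume_driver": LIGHTOS_DRIVER,
        # Control path: REST endpoints of the Lightbits cluster (assumed names).
        "lightos_api_address": ",".join(api_addresses),
        "lightos_api_port": str(api_port),
        # JWT used to authenticate REST calls; inlined here only for illustration.
        "lightos_jwt": jwt,
        "lightos_default_num_replicas": str(replicas),
    }
    buf = io.StringIO()
    cfg.write(buf)
    return buf.getvalue()


if __name__ == "__main__":
    print(render_lightbits_backend(
        name="lightbits",
        api_addresses=["192.0.2.21", "192.0.2.22", "192.0.2.23"],
        jwt="<JWT-from-a-Secret>",
    ))
```

Generating the stanza from code keeps per-environment values (addresses, replica count) in one place; how the resulting text is wired into the RHOSO control-plane manifest is covered in the Technical Reference Guide.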

The Right Storage Architecture for a Modern OpenStack

RHOSO represents Red Hat’s vision for OpenStack’s future: cloud-native, Kubernetes-managed, and operationally lean. Storage for that platform should hold the same values.

Lightbits brings disaggregation, NVMe performance, and the simplicity of standard Ethernet to RHOSO, without proprietary hardware, without a complex storage stack to operate, and without compromising the performance that VM workloads demand. The Lightbits CSI driver also extends the same storage fabric to regular Kubernetes workloads running on the OCP worker nodes, so the entire platform, VMs and containers alike, shares one storage backend.
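
On the container side, a workload consumes Lightbits storage through an ordinary PersistentVolumeClaim. The minimal sketch below builds one as a Python dict and prints it as JSON, which `oc apply -f -` accepts. The PVC fields are standard Kubernetes; the StorageClass name `lightbits-sc` is hypothetical and should be replaced with the class your Lightbits CSI driver installation actually defines.

```python
import json


def lightbits_pvc(name: str, namespace: str, size_gi: int,
                  storage_class: str = "lightbits-sc") -> dict:
    """Build a PersistentVolumeClaim against a CSI-backed StorageClass.

    `storage_class` defaults to a hypothetical name; use the StorageClass
    created by your Lightbits CSI driver installation.
    """
    if size_gi <= 0:
        raise ValueError("size_gi must be positive")
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "accessModes": ["ReadWriteOnce"],  # single-node read/write mount
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": f"{size_gi}Gi"}},
        },
    }


if __name__ == "__main__":
    # Pipe the output to `oc apply -f -` to create the claim.
    print(json.dumps(lightbits_pvc("db-data", "demo", 20), indent=2))
```

Because the claim names only a StorageClass, the same manifest works unchanged whether the class is backed by Lightbits or by another CSI driver, which is what lets containers share the storage fabric without application changes.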

Whether you are migrating existing OpenStack workloads to RHOSO or building a new hybrid cloud from scratch, Lightbits provides the storage layer that scales with the platform.

Go Deeper

The full technical integration guide — including all YAML manifests for control-plane and data-plane setup, permission requirements, multi-node scaling guidance, and a complete verification playbook — is available in the companion document:

Lightbits Storage Integration with RHOSO 18 — Technical Reference Guide

For questions, reach out to your Lightbits account team.