Benchmark results from Lightbits Labs, ScaleFlux, and FarmGPU demonstrate up to 286x faster time-to-first-token in long-context inference—turning minutes of GPU stall into seconds of productive generation.

Long Context is No Longer Optional

Large language models are being deployed with context windows that were unimaginable two years ago. Agentic systems, enterprise RAG pipelines, code assistants, and multi-document reasoning workflows routinely push past 100 k tokens. Some production workloads now approach one million.

For managed inference providers, NeoCloud operators, and frontier model teams, this shift has exposed a structural bottleneck that no amount of GPU procurement alone can solve: as context grows, KV cache movement dominates performance. When cached key–value data is not available at the exact moment attention needs it, the GPU stalls. Throughput collapses. Tail latency spikes. Power is consumed without producing a single token.

Today, we are releasing benchmark results from the LightInferra™ platform using ScaleFlux NVMe SSDs, demonstrating that this bottleneck can be removed entirely.

The Results

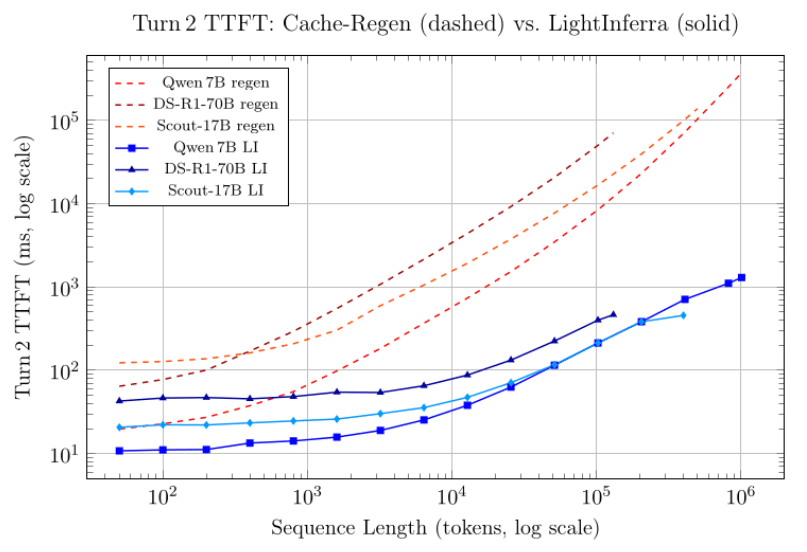

We benchmarked three models across a wide range of sequence lengths, comparing a standard cache-regen baseline (where the KV cache is recomputed from scratch on Turn 2) against the LightInferra path (where previously computed KV pages are retrieved from disaggregated storage). All tests ran on an NVIDIA DGX L40S system with 4 L40S GPUs.

The metric that matters most for production inference is Turn 2 TTFT: the time-to-first-token when a user returns to a conversation and the model must resume generating from an existing context. This is where KV reuse—or the lack of it—determines real-world responsiveness.

Headline Numbers

| Model & Sequence Length | Baseline TTFT | LightInferra TTFT | Improvement |
|---|---|---|---|
| DeepSeek-R1-70B @ 131 k tokens | 70.8 s | 465 ms | 152× |
| Qwen 2.5-7B @ 410 k tokens | 72.6 s | 711 ms | 102× |
| Qwen 2.5-7B @ 1.01 M tokens | 372.3 s | 1.3 s | 286× |
| Llama-4-Scout-17B @ 400 k tokens | 103 s | 457 ms | 226× |

At one million tokens, the baseline took over six minutes before the first token appeared. With LightInferra, the same workload began generating in roughly 1.3 seconds. For operators serving long-context requests, that difference determines whether a product tier is viable—or abandoned.
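For readers who want to check the Improvement column, the short sketch below recomputes it as baseline TTFT divided by LightInferra TTFT, using only the rounded figures published in the table. The dictionary name and function name are illustrative, not part of any LightInferra tooling. Because the inputs are rounded, a recomputed ratio can differ from the published one by a fraction of a unit (the Llama-4-Scout row comes out slightly below the published 226×).

```python
# Turn 2 TTFT figures copied from the headline table, in seconds,
# followed by the published improvement factor.
HEADLINE = {
    "DeepSeek-R1-70B @ 131 k": (70.8, 0.465, 152),
    "Qwen 2.5-7B @ 410 k": (72.6, 0.711, 102),
    "Qwen 2.5-7B @ 1.01 M": (372.3, 1.3, 286),
    "Llama-4-Scout-17B @ 400 k": (103.0, 0.457, 226),
}


def speedup(baseline_s: float, lightinferra_s: float) -> float:
    """Improvement factor: baseline TTFT divided by LightInferra TTFT."""
    return baseline_s / lightinferra_s


for name, (baseline_s, fast_s, published) in HEADLINE.items():
    ratio = speedup(baseline_s, fast_s)
    print(f"{name}: {ratio:.1f}x recomputed, {published}x published")
```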

What the Data Shows

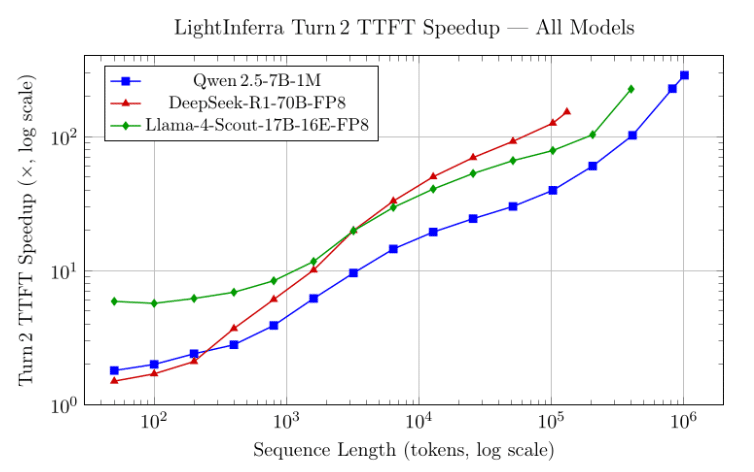

The following figures present the full picture across all three models.

The pattern is consistent across architectures and model sizes: cache-regen TTFT scales at least linearly with sequence length (the Qwen 2.5-7B baseline rises from 72.6 s at 410 k tokens to 372.3 s at 1.01 M), while LightInferra TTFT grows sub-linearly. At 100 k tokens the gap is already one to two orders of magnitude. By one million tokens, the baseline is functionally unusable for interactive workloads.
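As a rough check on the scaling behavior, the sketch below estimates the exponent k in TTFT ∝ tokens^k from the two Qwen 2.5-7B rows of the headline table. It assumes the rounded token counts (410 k and 1.01 M) are close enough to the real ones, and two points cannot establish a trend, so treat the result as indicative only. The function name is illustrative.

```python
import math


def scaling_exponent(n1: float, t1: float, n2: float, t2: float) -> float:
    """Exponent k implied by two (tokens, seconds) points if t ~ n**k."""
    return math.log(t2 / t1) / math.log(n2 / n1)


# Qwen 2.5-7B headline rows: (tokens, Turn 2 TTFT in seconds).
baseline_k = scaling_exponent(410_000, 72.6, 1_010_000, 372.3)
lightinferra_k = scaling_exponent(410_000, 0.711, 1_010_000, 1.3)

print(f"cache-regen exponent:  {baseline_k:.2f}")
print(f"LightInferra exponent: {lightinferra_k:.2f}")
```

For this pair of points the cache-regen exponent comes out above 1 and the LightInferra exponent below 1, which is the widening gap the figures illustrate.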

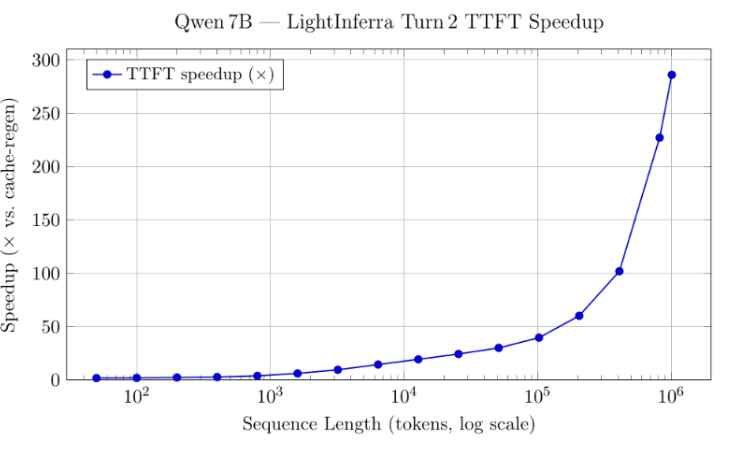

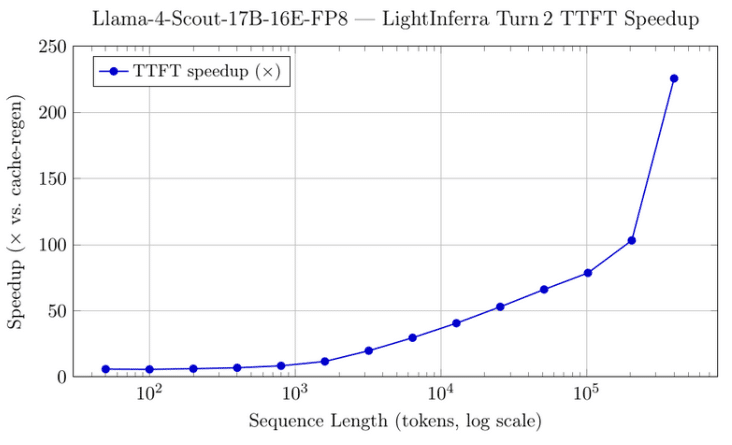

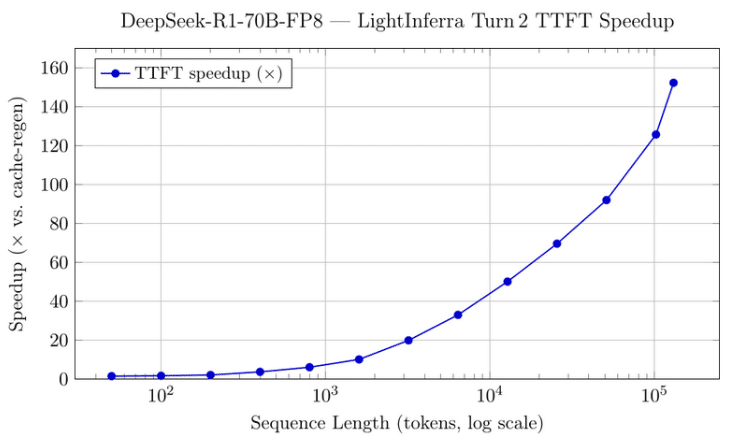

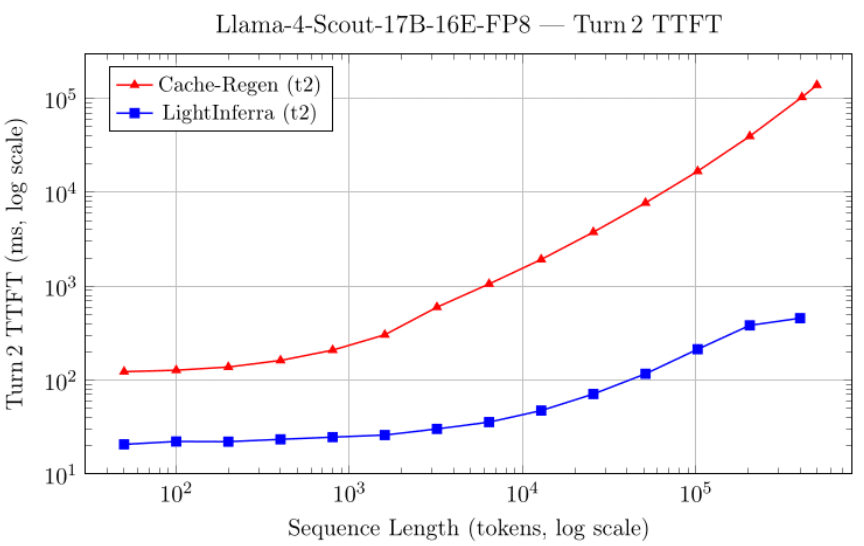

The accompanying figures show the performance improvements measured for each model individually.

What this Means for Inference Operators

Managed inference services and NeoCloud operators measure success in throughput within SLA. When long-context requests stall GPUs, the impact is immediate: utilization drops, concurrency suffers, QPS per GPU declines, and more infrastructure is needed to deliver the same revenue. Worse, operators who resort to KV cache compaction or eviction to cope with memory pressure risk degrading the quality of generated results.

These benchmarks show that long context no longer needs to create that collapse. By proactively ensuring the right KV blocks are ready before attention touches them, LightInferra removes the stall from the critical path. The outcome is sustained TTFT performance as context scales from 100 k tokens toward 1 M and beyond. As Johnmichael Hands of FarmGPU puts it, when a 400k-token conversation resumes and the system regenerates the entire KV cache from scratch, that is two minutes of GPU time producing zero tokens. LightInferra changes the economics completely—the same workload completes its first token in under half a second, turning a non-viable product tier into a profitable one.

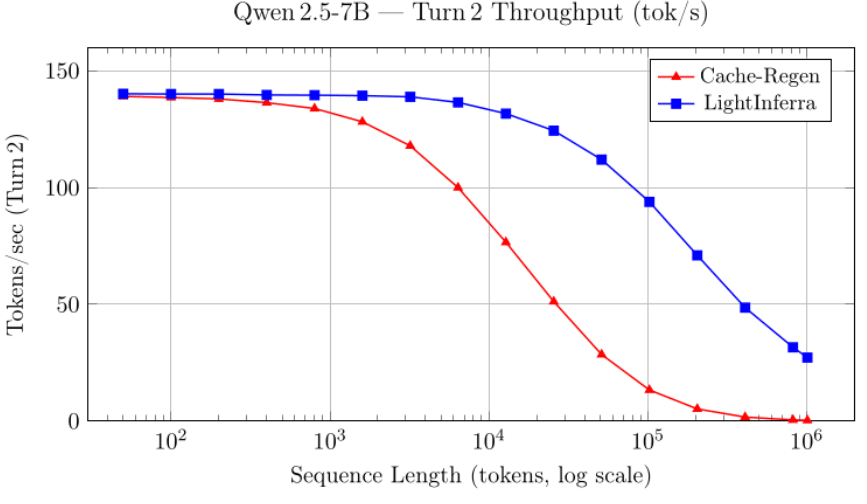

The throughput story reinforces the latency story. Figure 3 shows Turn 2 tokens per second for the Qwen 7B model. At 100 k tokens, LightInferra delivers roughly 95 tok/s while cache-regen has already dropped to around 5 tok/s—a 19× throughput advantage. The gap only widens from there: at one million tokens, LightInferra sustains 27 tok/s versus 0.3 tok/s for cache-regen, a regime where the baseline is effectively non-functional.

The Storage Layer Matters

The LightInferra benchmarks were conducted using ScaleFlux computational storage drives, which provide the NVMe bandwidth and low-latency random-read performance that Sub-Linear Attention Prefetch depends on. When KV pages are fetched from storage in microseconds rather than milliseconds, each prefetch completes inside its window, before attention would otherwise stall. Without the right storage, these numbers are not possible. As Keith McKay of ScaleFlux explains, LLM inference does not read sequentially; it pulls scattered pages across a large address space with tight deadlines. ScaleFlux drives are designed for exactly that profile: high queue depth, low tail latency, and sustained random-read throughput. The right storage architecture eliminates the I/O penalty that makes long-context inference expensive.
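The general idea of having KV data ready before attention needs it can be illustrated with a generic one-step-ahead pipeline. To be clear, this is not the Sub-Linear Attention Prefetch algorithm, whose internals are not described here. It is a minimal sketch of plain look-ahead prefetching, and `fetch_kv` and `attend` are hypothetical callables standing in for a storage read and an attention step.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def run_layers_with_prefetch(
    num_layers: int,
    fetch_kv: Callable[[int], bytes],      # hypothetical: read one layer's KV pages
    attend: Callable[[int, bytes], None],  # hypothetical: run attention for a layer
) -> None:
    """Overlap the KV fetch for layer i+1 with attention for layer i."""
    if num_layers <= 0:
        return
    with ThreadPoolExecutor(max_workers=1) as pool:
        pending = pool.submit(fetch_kv, 0)
        for layer in range(num_layers):
            kv = pending.result()  # blocks only if the fetch is running late
            if layer + 1 < num_layers:
                # Start the next fetch before computing, so I/O and compute overlap.
                pending = pool.submit(fetch_kv, layer + 1)
            attend(layer, kv)
```

In a pipeline like this, the compute step stalls only when a fetch takes longer than the attention step it overlaps with, which is why storage read latency matters so much.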

Beyond speed, there is a capacity problem. Production agentic systems must be stateful—they maintain conversation histories, reasoning chains, and tool-call context across sessions. At scale, that means petabytes of KV cache data that must remain accessible at low latency. GPU memory alone cannot hold it, and local NVMe on each node does not scale far enough. This is exactly why a disaggregated storage layer is necessary: one that scales capacity and throughput independently of the GPU fleet, delivers the random-read performance that KV cache retrieval demands, and keeps every agent’s state ready to resume in milliseconds.
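To put the capacity problem in numbers, the sketch below estimates KV cache size per token from a model's attention shape. The layer counts, KV-head counts, and head dimensions are assumptions taken from the publicly available model configurations for Qwen2.5-7B and Llama-70B-class models, and should be checked against each model's `config.json`. The 2-byte element size is also an assumption, since the cache precision used in these benchmarks is not stated.

```python
def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                       bytes_per_elem: int = 2) -> int:
    """Bytes of KV cache per token: keys plus values, across all layers."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem


# Assumed attention shapes (verify against the model configs).
QWEN_7B = dict(layers=28, kv_heads=4, head_dim=128)
LLAMA_70B = dict(layers=80, kv_heads=8, head_dim=128)

for name, shape, tokens in [
    ("Qwen 2.5-7B @ 1.01 M tokens", QWEN_7B, 1_010_000),
    ("Llama-70B-class @ 131 k tokens", LLAMA_70B, 131_000),
]:
    per_token = kv_bytes_per_token(**shape)
    total_gb = per_token * tokens / 1e9
    print(f"{name}: {per_token} B/token, about {total_gb:.1f} GB per session")
```

Under these assumptions a single long session occupies tens of gigabytes, so even a modest number of resumable sessions exceeds what GPU memory can hold.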

Model-by-Model Highlights

Qwen 2.5-7B-Instruct-1M.

The longest-context test in the suite reaches just over one million tokens. LightInferra kept Turn 2 TTFT at or below roughly 1.3 seconds across the entire range, while cache-regen exceeded six minutes at the longest sequence. At 410 k tokens the speedup was 102×; at 1 M tokens it reached 286×, with throughput of 27.2 tok/s versus 0.3 tok/s for cache-regen.

DeepSeek-R1-Distill-Llama-70B-FP8.

The largest model tested, with FP8 dynamic quantization on all 4 L40S GPUs. At 131 k tokens—the model’s effective context ceiling on this hardware—LightInferra delivered 152x faster TTFT (465 ms vs. 70.8 s) and sustained 18.7 tok/s, where cache-regen managed 1.7 tok/s. The 70B model showed the steepest per-token speedup gains at overlapping sequence lengths, consistent with its larger per-token KV footprint.

Llama-4-Scout-17B-16E-FP8.

The MoE architecture produced an interesting finding: LightInferra showed a higher baseline speedup even at short sequences (~6x at 50 tokens, vs. 1.5–1.8x for the dense models), and actually exceeded cache-regen’s short-context throughput—73.9 tok/s vs. 64.0 tok/s at 50 tokens. This suggests the MoE expert-routing overhead during prefill is substantial, and LightInferra’s cache retrieval avoids it entirely. At 400 k tokens the speedup reached ~226x.

Efficiency at Scale

The headline numbers capture the impact on latency. The broader implication is infrastructure efficiency.

When GPUs are not stalled waiting for KV cache, they generate tokens continuously. That translates into higher effective utilization and more tokens per watt. Power and capital expenditure produce measurable output rather than latency. In the words of Johnmichael Hands of FarmGPU, LightInferra flattens the cost curve—a workload that used to consume two minutes of GPU time before producing a single token now takes a second. That is not an optimization; it is a different business model for long-context inference.

As context windows continue to grow toward multi-million-token sessions, infrastructure that relies on reactive KV paging—or worse, full recomputation—will face increasingly steep scaling penalties. These results demonstrate that the bottleneck can be addressed directly.

A Structural Shift in Long-Context Inference

The industry is moving toward persistent agents, large knowledge surfaces, and deeper reasoning across vast token histories. The infrastructure supporting that future must scale without collapsing under its own memory movement. As Keith McKay of ScaleFlux notes, the storage industry has spent years optimizing for training workloads—sequential writes, large block sizes, aggregate bandwidth. Inference demands a fundamentally different access pattern, and long-context inference is the most demanding variant. When the storage layer is co-designed with the inference engine—when the drive anticipates what the GPU needs—an entire class of performance cliffs disappears. That is where computational storage becomes essential, not optional.

The LightInferra platform demonstrates that long-context inference can remain performant—even at and beyond one million tokens—when KV cache readiness is engineered as a first-class concern.

For providers evaluating how to offer longer context without sacrificing stability or efficiency, these results represent more than speed. They represent a path to sustainable long-context economics.

Test Environment and Methodology

All benchmarks were conducted on an NVIDIA L40S system with 4× L40S GPUs, running on FarmGPU. NVMe storage was provided by ScaleFlux.

Turn 1 is the initial prompt ingestion. Turn 2 measures TTFT when the model resumes generation with the prompt already processed—the scenario where KV cache reuse determines performance. The cache-regen baseline recomputes the full KV cache on Turn 2. The LightInferra path retrieves cached KV pages with Sub-Linear Attention Prefetch.
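For anyone reproducing the Turn 2 measurement on their own stack, a minimal timing harness might look like the sketch below. It is not the harness used for these benchmarks. `stream` is a hypothetical callable that returns an iterator of generated tokens from whatever inference client is in use, and computing throughput over the full wall-clock time of the turn (including TTFT) is an assumption about how tok/s is defined.

```python
import time
from typing import Callable, Iterable, Tuple


def measure_turn(
    stream: Callable[[], Iterable[str]],  # hypothetical: yields tokens as generated
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[float, float, int]:
    """Return (ttft_seconds, tokens_per_second, token_count) for one turn."""
    start = clock()
    first_token_at = None
    count = 0
    for _ in stream():
        now = clock()
        if first_token_at is None:
            first_token_at = now  # time-to-first-token is measured here
        count += 1
    end = clock()
    if first_token_at is None:
        raise ValueError("stream produced no tokens")
    total = end - start
    tokens_per_s = count / total if total > 0 else float("inf")
    return first_token_at - start, tokens_per_s, count
```

Running the same Turn 2 request once with the cache-regen path and once with cached KV retrieval, and comparing the first returned value, gives the TTFT comparison described above.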

Models tested: Qwen/Qwen2.5-7B-Instruct-1M, neuralmagic/DeepSeek-R1-Distill-Llama-70B-FP8-dynamic, and RedHatAI/Llama-4-Scout-17B-16E-Instruct-FP8-dynamic.

Full benchmark data and the Open-KVCache API specification are available at lightbitslabs.ai.

If you operate large-scale inference fleets and want to validate these results on your own workloads, we welcome the conversation. Contact: info@lightbitslabs.com